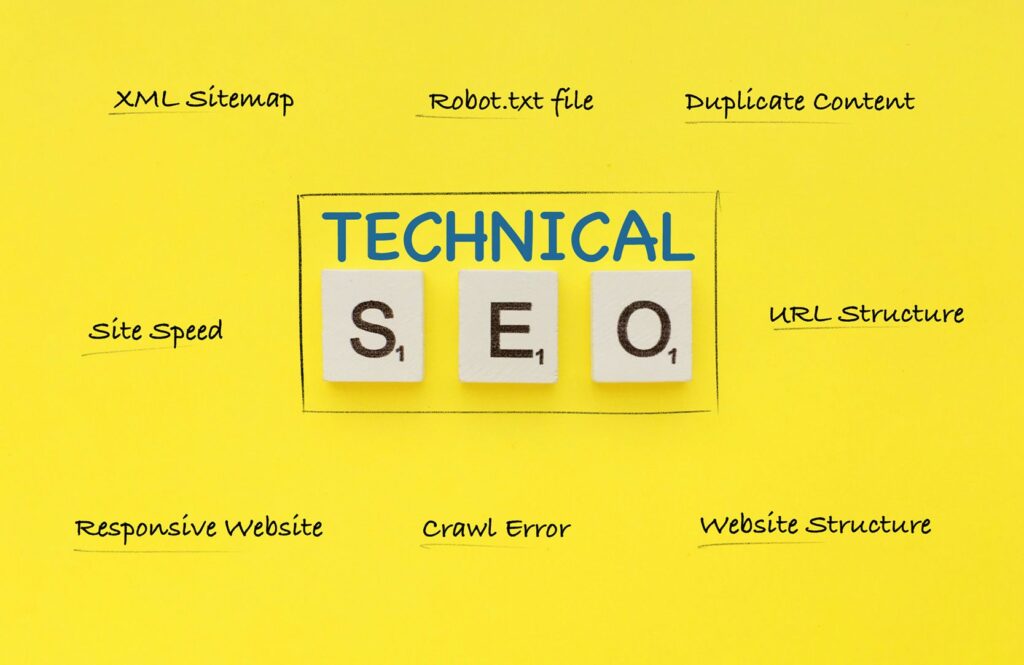

Having a website is essential for any business, but if that website isn’t crawlable and indexable it won’t be visible to search engines. Technical SEO makes sure your site can be crawled and indexed so customers can find you online.

It’s not enough to just create content; the underlying technical structure of your website must also be optimized for search engine visibility. This article will discuss what technical SEO is, why it matters, and how you can ensure your site is set up correctly for maximum visibility on the web.

Ensuring Proper Crawl Accessibility

Ensuring proper crawl accessibility is critical to the success of any website. It allows search engines to access, read, and index your content so that it can appear in SERPs (Search Engine Results Pages).

Good crawl accessibility means that every part of your site can be reached by the bots that search engines such as Google, Bing, or Yahoo operate. When you set up a website with SEO in mind, make sure every page is linked from other pages on the same domain and, where possible, from external sites as well.

Additionally, create an XML sitemap that lists the URLs on the domain you want crawled. A sitemap tells search engine crawlers what content exists on the site and where to find it, which is especially useful for pages buried deep in the site structure.

Crawl access matters for discoverability in SERPs, and an effective internal linking structure also improves the user experience when navigating between pages. Make sure every page has at least one link pointing to it from another part of the website; this helps both visitors and search engine bots understand where each page fits into your overall information architecture.
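The rule that every page needs at least one inbound link can be checked mechanically. The sketch below is one possible approach, not a standard tool: it assumes you already have a map of internal links (the `site_links` dictionary and its paths are hypothetical) and walks it from the home page to list pages a crawler following links would never reach.

```python
from collections import deque


def find_unreachable_pages(links, start):
    """Return pages that cannot be reached by following links from `start`.

    `links` maps each page path to the list of internal paths it links to.
    """
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    # Every known page that was never visited has no crawl path from `start`.
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(all_pages - seen)


# Hypothetical site structure, for illustration only.
site_links = {
    "/": ["/services", "/blog"],
    "/services": ["/contact"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/"],
    "/contact": [],
    "/old-landing-page": ["/"],  # links out, but nothing links to it
}

print(find_unreachable_pages(site_links, "/"))  # ['/old-landing-page']
```

Pages reported here are candidates for a new internal link or, if they are obsolete, for removal or a redirect.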

Following these guidelines helps ensure proper crawl accessibility, which leads to better visibility in organic results across the major search engines and, ultimately, to more traffic to your site.

Structuring and Optimizing URLs

Structuring and Optimizing URLs is an important step when it comes to Technical SEO. Ensuring your website’s URLs are optimized for search engines can help you increase visibility, improve rankings, and ensure visitors easily find what they’re looking for.

Properly structured URLs make it easier for search engine crawlers to understand the content of a page or post, and they help users quickly identify where they are on your site. When structuring and optimizing your URLs, keep these key elements in mind: use descriptive words in the URL; keep URLs short yet meaningful; avoid duplicate content by giving each page a single unique URL; separate words with hyphens rather than underscores or spaces (which end up encoded as %20); add keywords strategically; include categories in the structure where it makes sense; and create 301 redirects from old pages to new pages whenever a URL changes.

Making sure all these elements are taken care of will help you reap the benefits of better crawlability and indexation while also improving user experience.
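Several of these URL guidelines (lowercase descriptive words, hyphens as separators, no encoded spaces, a category in the path) can be applied automatically when a page is created. The following is a minimal sketch under those assumptions; the function names `slugify` and `build_path` and the example titles are illustrative, not part of any particular CMS.

```python
import re
import unicodedata


def slugify(title):
    """Turn a page title into a lowercase, hyphen-separated URL slug."""
    # Strip accents so "café" becomes "cafe" instead of a percent-encoded sequence.
    ascii_text = (
        unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    )
    # Replace every run of characters other than letters and digits with one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower())
    return slug.strip("-")


def build_path(category, title):
    """Combine a category and a title into a /category/page-title path."""
    return f"/{slugify(category)}/{slugify(title)}"


print(build_path("Blog", "What Is Technical SEO?"))  # /blog/what-is-technical-seo
```

Keeping slug generation in one place also helps with the duplicate content point: the same title always maps to the same URL.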

Creating Sitemaps and Submitting to Search Engines

Creating a sitemap and submitting it to search engines is an essential part of technical SEO. A sitemap helps search engine bots discover the pages on your website, see where they are located, and understand how they relate to one another.

This helps the search engine decide which pages should be indexed in its results. To create a proper sitemap, include every URL you want indexed along with other relevant information, such as when each page was last updated or changed.

This information helps ensure that the most up-to-date version of each page is crawled and indexed. Once the sitemap is ready, submit it in Google Search Console and/or Bing Webmaster Tools so that each search engine knows where to find it. For best results, add any new content to your sitemap before submitting it; this can shorten the wait for Google and Bing to discover those pages.

Additionally, because websites change over time (for example, through new URL redirects or rewrites), updating existing sitemaps regularly should be part of ongoing technical SEO practice.
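As an illustration of what such a sitemap contains, the sketch below builds a small XML sitemap with Python’s standard library, using the `urlset`, `url`, `loc`, and `lastmod` elements from the sitemaps.org format. The `build_sitemap` helper, the example.com URLs, and the dates are assumptions made for the example; in practice most sites generate this file through their CMS or a plugin.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified) pairs.

    `last_modified` is expected as an ISO date string such as "2024-01-15".
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = last_modified
    ET.indent(urlset)  # pretty-print; requires Python 3.9 or newer
    xml_bytes = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


# Hypothetical pages, for illustration only.
pages = [
    ("https://example.com/", "2024-01-15"),
    ("https://example.com/services", "2024-02-01"),
]
print(build_sitemap(pages))
```

Regenerating the file whenever a page is added, moved, or redirected keeps the `lastmod` values and the URL list in step with the live site.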

Utilizing the Robots Exclusion Protocol

The Robots Exclusion Protocol (REP), usually implemented through a robots.txt file at the root of the site, is an important part of technical SEO because it controls which parts of a website crawlers may request. It tells search engines which sections should not be crawled. Note that blocking a URL from crawling does not by itself keep it out of the index; a page that must not appear in search results needs a noindex directive instead.

Utilizing REP can save crawl time and server resources in the long run by steering crawlers away from irrelevant content. It also allows webmasters to give specific instructions to each type of robot visiting the site by addressing rules to individual user agents.

By setting up these rules correctly, webmasters have more control over which content crawlers fetch and, as a result, how the site is represented in search engines. REP does not make search engines pick up changes any faster, but keeping crawlers out of low-value areas lets them spend their time on the pages that matter.

With the Robots Exclusion Protocol properly implemented on your site, crawlers can focus on the content you actually want found.
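Before deploying a robots.txt file, it is worth checking that its rules do what you intend. The sketch below uses `urllib.robotparser` from Python’s standard library to test a few URLs against a sample file. The file contents, paths, and the `is_allowed` helper are hypothetical, and real crawlers may interpret edge cases slightly differently from this parser.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/

User-agent: Googlebot
Disallow: /drafts/

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_allowed(user_agent, url):
    """Return True if the parsed rules let `user_agent` crawl `url`."""
    return parser.can_fetch(user_agent, url)


# Crawlers without their own group fall back to the "*" rules.
print(is_allowed("Bingbot", "https://example.com/admin/login"))    # False
print(is_allowed("Bingbot", "https://example.com/blog/"))          # True
# Googlebot has its own group, so only that group's rules apply to it.
print(is_allowed("Googlebot", "https://example.com/drafts/post"))  # False
```

Note that a crawler with its own group ignores the `*` group entirely, so any rule that should apply to every bot has to be repeated in each specific group.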

Checking for Broken Links and Errors

Checking for broken links and errors is an important step in the technical SEO process. It helps to ensure a website is crawlable and indexable by search engines.

This check can identify issues that may be hurting a website’s visibility, ranking, or usability. Things to look out for include URLs that don’t lead anywhere (404 errors), missing images or content elements, incorrect redirects, coding issues such as HTML validation errors, and internal server errors (500). These are just some of the problems that can prevent a website from being indexed properly by search engine bots, leading to poor rankings or even exclusion from results pages altogether.
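Much of this audit comes down to sorting URLs by the HTTP status code they return. The sketch below assumes the status codes have already been collected by a crawler or a link-checking tool (the `crawl_results` data is made up for the example) and groups them into coarse categories for review.

```python
def classify_status(status_code):
    """Map an HTTP status code to a coarse audit label."""
    if 200 <= status_code < 300:
        return "ok"
    if 300 <= status_code < 400:
        return "redirect"
    if 400 <= status_code < 500:
        return "broken"
    if 500 <= status_code < 600:
        return "server error"
    return "unknown"


def audit(crawl_results):
    """Group URLs by label; `crawl_results` maps each URL to its status code."""
    report = {}
    for url, status in crawl_results.items():
        report.setdefault(classify_status(status), []).append(url)
    return report


# Hypothetical crawl output, for illustration only.
crawl_results = {
    "https://example.com/": 200,
    "https://example.com/old-page": 301,
    "https://example.com/missing": 404,
    "https://example.com/checkout": 500,
}

for label, urls in audit(crawl_results).items():
    print(label, urls)
```

Redirects are listed separately because they are not errors in themselves, but each one should be checked to confirm it points to the intended destination.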

It’s also important to test loading times. If a page takes too long to load, user experience suffers and visitors may click away before they see what’s on offer. Regular checks on loading speed should therefore be part of any technical SEO audit.

In addition, test regularly on different devices to make sure everything works correctly across all platforms, including mobile phones and tablets; this is something many people forget when auditing their site. Such checks can reveal further weaknesses that need fixing before poor user experiences cost you customers and revenue.

Conclusion

Technical SEO is an important component of any website. It ensures that your website is crawlable and indexable so that search engines can quickly identify, assess, and rank it.

For independent escorts, this means that SEO plays a vital role in creating visibility for their services on the internet. By taking the time to optimize their websites through technical SEO tactics such as proper header tag usage and optimized URLs, independent escorts can make it easier for potential customers to find them.

With effective technical SEO practices implemented correctly, websites for independent escorts will have a much better chance of appearing at the top of search engine results pages for relevant queries.